- Category name, synonyms and definition: [Categories]
- Tracklet captions:
  - MOT17 subset: [Train annotations], [Sub-optimal test annotations]
  - TAO subset: [Train annotations], [Val annotations]
- Object retrieval:
  - TAO subset: Under assessment

Statistics

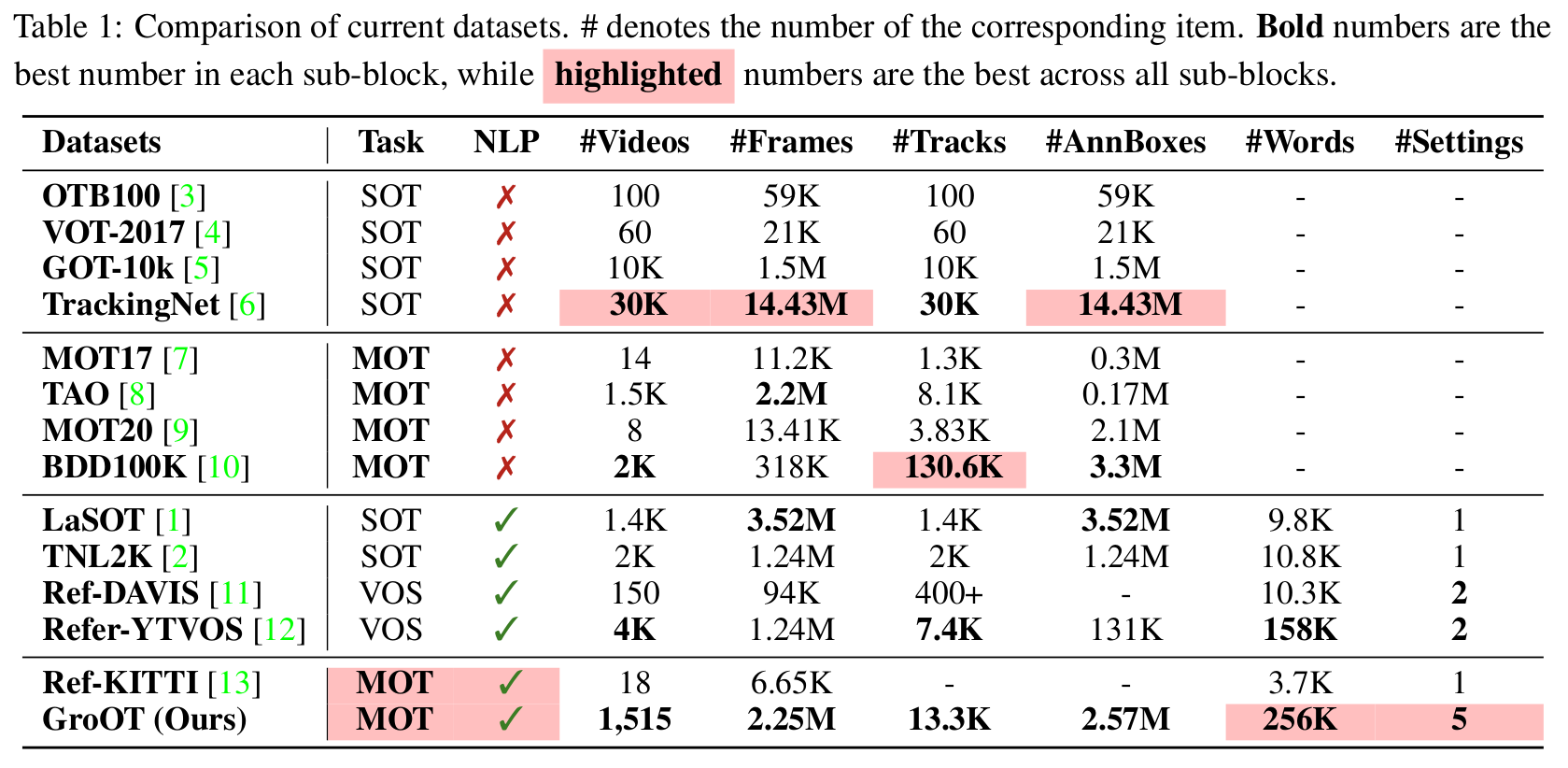

One recent trend in vision problems is to use natural language captions to describe the objects of interest. This approach can overcome some limitations of traditional methods that rely on bounding boxes or category annotations. This paper introduces a novel paradigm for Multiple Object Tracking called Type-to-Track, which allows users to track objects in videos by typing natural language descriptions. We present a new dataset for this Grounded Multiple Object Tracking task, called GroOT, which contains videos with various types of objects and corresponding textual captions describing their appearance and actions in detail. Additionally, we introduce two new evaluation protocols and formulate evaluation metrics specifically for this task. We develop a new efficient method that models a transformer-based eMbed-ENcoDE-extRact framework (MENDER) using third-order tensor decomposition. Experiments in five scenarios show that MENDER outperforms a two-stage design in both accuracy and efficiency, with up to 14.7% higher accuracy and 4× faster speed.

GroOT contains videos with various types of objects and corresponding textual captions, totaling 256K words, that describe their appearance and actions in detail. To cover a diverse range of scenes, GroOT was created using official videos and bounding box annotations from MOT17, TAO, and MOT20.

| 1.52K videos | 13.3K tracks | 256K words |
|---|---|---|
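As a rough sanity check on the headline figures, the averages they imply can be worked out directly. The sketch below uses only the rounded counts from the statistics table (1.52K videos, 13.3K tracks, 256K words), so the results are approximate. The function name `summarize` is illustrative and is not part of any GroOT tooling.

```python
def summarize(videos: float, tracks: float, words: float) -> dict:
    """Return the average ratios implied by the dataset's headline counts."""
    return {
        "tracks_per_video": tracks / videos,
        "words_per_track": words / tracks,
        "words_per_video": words / videos,
    }


# Rounded counts as published in the statistics table (assumed approximate).
stats = summarize(videos=1.52e3, tracks=13.3e3, words=256e3)
for name, value in stats.items():
    print(f"{name}: {value:.1f}")
```

With these rounded inputs, the dataset averages roughly 9 tracks per video and about 19 caption words per track.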

Here are examples of what's annotated on videos of the GroOT dataset:

@article{nguyen2023type,
  title = {Type-to-Track: Retrieve Any Object via Prompt-based Tracking},
  author = {Nguyen, Pha and Quach, Kha Gia and Kitani, Kris and Luu, Khoa},
  journal = {Advances in Neural Information Processing Systems},
  year = 2023
}

[1] Kha Gia Quach, Pha Nguyen, Huu Le, Thanh-Dat Truong, Chi Nhan Duong, Minh-Triet Tran, and Khoa Luu. "DyGLIP: A dynamic graph model with link prediction for accurate multi-camera multiple object tracking." In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021. [paper, code]

[2] Pha Nguyen, Kha Gia Quach, Chi Nhan Duong, Ngan Le, Xuan-Bac Nguyen, and Khoa Luu. "Multi-camera multiple 3d object tracking on the move for autonomous vehicles." In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, 2022. [paper]

[3] Pha Nguyen, Kha Gia Quach, Chi Nhan Duong, Son Lam Phung, Ngan Le, and Khoa Luu. "Multi-Camera Multi-Object Tracking on the Move via Single-Stage Global Association Approach." Under review. [paper]

[4] Pha Nguyen, Kha Gia Quach, John Gauch, Samee U. Khan, Bhiksha Raj, and Khoa Luu. "UTOPIA: Unconstrained Tracking Objects without Preliminary Examination via Cross-Domain Adaptation." Under review. [paper]

[5] Kha Gia Quach, Huu Le, Pha Nguyen, Chi Nhan Duong, Tien Dai Bui, and Khoa Luu. "Depth Perspective-aware Multiple Object Tracking." Under review. [paper]
